Making Looking for Nigel - our tips for creating audio AR experiences

This is the second blog post on our work developing an audio augmented reality experience. The first part took you through how we built the experience and the world within it. In this instalment we'll show you some of the scenarios we tested and how we put together the sound environment. At the end of this post you'll find some recommendations for anyone interested in creating their own audio AR experiences, and you'll also find out what happened to our prototype...

Evasive Nigel

While Rosie was crafting the narrative, we continued to improve upon the fluidity of the interactions.

Vitally, we needed to set the challenge of spatial sound location at the right level. Guided only by their ears, players would need to walk around to find the virtual locus of various sounds - much harder than it might seem when there are no visual cues.

Moreover, although everyone's head is slightly different, spatial sound technology currently operates on the principle of a “standard” head shape, which introduces varying degrees of inaccuracy when the listener is challenged to locate spatial sounds mapped onto the physical world. Some of our testers even experienced sounds as being “located” on top of surrounding buildings!

We needed to improve this interaction mechanic.

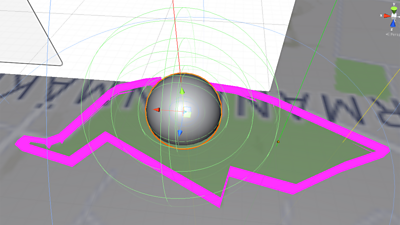

Firstly, we knew we couldn't simply rely on an increase in volume to indicate that the player was moving in the right direction. We needed to add cues: some sort of feedback mechanism layered on top of the spatially-located sound to clearly indicate intermediate successes. We began to wrap our sound objects in several concentric boundaries to test different forms of feedback.

Our three test scenarios:

- The baseline: a single located sound.

- A single located sound with additional noises triggered as the player grew closer, to provide helpful feedback.

- Devised by Rosie: a single located sound with additional layers added to the original sound, like the different parts of a musical arrangement, building up as the player grew closer.
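The concentric boundaries behind the second and third scenarios boil down to a distance check. This is a minimal sketch, not our Unity implementation: the class name, the four ring radii and the 2-metre fade are all hypothetical, and positions are assumed to be flat 2D coordinates in metres. Each boundary the player crosses brings in one more layer of the arrangement, as in Rosie's scenario; in the "additional noises" scenario the same crossings would instead fire one-off feedback sounds.

```python
import math
from dataclasses import dataclass


@dataclass
class LayeredSoundObject:
    """A located sound wrapped in concentric trigger boundaries.

    Hypothetical structure: the ring radii and fade width are illustrative.
    """
    x: float
    y: float
    # Boundary radii in metres, outermost first; each ring unlocks one more layer.
    ring_radii: tuple = (30.0, 20.0, 10.0, 4.0)

    def distance_to(self, px: float, py: float) -> float:
        return math.hypot(px - self.x, py - self.y)

    def active_layers(self, px: float, py: float) -> int:
        """How many extra arrangement layers should be playing at (px, py)."""
        d = self.distance_to(px, py)
        return sum(1 for r in self.ring_radii if d <= r)

    def layer_gains(self, px: float, py: float, fade: float = 2.0) -> list:
        """Per-layer gain in [0, 1], fading each layer in over `fade` metres
        after its boundary is crossed so it never switches on abruptly."""
        d = self.distance_to(px, py)
        return [min(1.0, max(0.0, (r - d) / fade)) for r in self.ring_radii]
```

For a sound at the origin, a player 25 m away hears just the first extra layer, while a player 3 m away hears all four. The short fade keeps a layer from popping in the instant a boundary is crossed, which could help when the phone's position estimate jitters around a ring's edge.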

An augmented acoustic environment

One of the most fascinating aspects of augmented reality is the interplay between the virtual and the real. For audio AR, this can be considerably more subtle than visual AR. As the development of Looking for Nigel progressed, we found that the richness of interplay between the environmental acoustic reality and the universe of Nigel was growing in nuance and complexity.

We soon realised that to add texture to the experience, we could use sound more subliminally, in the form of cues: cues to indicate that the scene had shifted to another universe, or that the player's actions were causing an observable change in the environment.

In the first and most obvious instance, this entailed the creation of a distinctive ambience for each universe. The “Construction” universe, for example, would have a backdrop of humming machinery and whirring gears. The listener would hear this in stereo, without spatialisation, as a thin layer above the base layer.

We enriched this ambience by using the geography of the player's environment - the park - as the stimulus for a generative soundscape. We could place sounds around the park that players could simply stumble into, adding a sense of sandbox exploration and freedom to the experience (taking inspiration from open-ended games like No Man's Sky, Diablo III, Elite or roguelikes). This serendipity would also increase the replay value: you'd be travelling through a slightly different soundscape each time.

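To achieve semi-random placement of sound objects on a two-dimensional plane, we used an algorithm known as Poisson disc sampling, which scatters points at random while guaranteeing a minimum distance between any two of them, so sounds spread across the space without clumping together.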
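The post names the algorithm but not the variant or its parameters, so here is a minimal sketch using Bridson's fast Poisson disc sampling over a rectangle. It assumes the park has already been projected into local coordinates in metres; a real version would also discard points falling outside the park's outline, which is left out here. The function name and defaults are illustrative.

```python
import math
import random


def poisson_disc_sample(width, height, min_dist, k=30, seed=None):
    """Bridson-style Poisson disc sampling over a width x height rectangle.

    Returns a list of (x, y) points in which no two points are closer
    than min_dist. k is the number of candidates tried around each
    active point before it is retired.
    """
    rng = random.Random(seed)
    cell = min_dist / math.sqrt(2)  # a cell this size holds at most one point
    cols = max(1, math.ceil(width / cell))
    rows = max(1, math.ceil(height / cell))
    grid = [[None] * cols for _ in range(rows)]

    def cell_of(p):
        # Clamp guards against floating-point edge cases at the far border.
        return (min(int(p[1] // cell), rows - 1), min(int(p[0] // cell), cols - 1))

    def fits(p):
        if not (0 <= p[0] < width and 0 <= p[1] < height):
            return False
        r, c = cell_of(p)
        # Any point closer than min_dist lies at most two cells away.
        for rr in range(max(r - 2, 0), min(r + 3, rows)):
            for cc in range(max(c - 2, 0), min(c + 3, cols)):
                q = grid[rr][cc]
                if q is not None and math.dist(p, q) < min_dist:
                    return False
        return True

    def add(p):
        r, c = cell_of(p)
        grid[r][c] = p
        samples.append(p)
        active.append(p)

    samples, active = [], []
    add((rng.uniform(0, width), rng.uniform(0, height)))

    while active:
        i = rng.randrange(len(active))
        base = active[i]
        for _ in range(k):
            # Candidate drawn from the annulus between min_dist and 2 * min_dist.
            angle = rng.uniform(0, 2 * math.pi)
            radius = rng.uniform(min_dist, 2 * min_dist)
            cand = (base[0] + radius * math.cos(angle),
                    base[1] + radius * math.sin(angle))
            if fits(cand):
                add(cand)
                break
        else:
            # No candidate fitted: swap-remove this point from the active list.
            active[i] = active[-1]
            active.pop()
    return samples
```

For example, `poisson_disc_sample(60.0, 40.0, 8.0, seed=42)` returns positions at least 8 m apart across a 60 m by 40 m patch. Using a different seed for each session would give every playthrough a different arrangement, in keeping with the replay-value idea above.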

These sound objects were spatialised using Resonance Audio, becoming either fixed positions of sound for the player to pass through (such as bubbling metal in the Construction universe) or unfixed sounds triggered by player movements (such as stumbling into a band kit in an Instrument universe). Hidden in each universe/level was the Easter Egg sound of a party popper.

With our testers, we found that the real and the augmented worlds would sometimes blur. Was that a real plane passing overhead or part of the game?

The final and most important element of our augmented acoustic environment was the dialogue - the player's conversations with our various Nigels, each propelling the narrative forward.

To spatialise the dialogue, we initially experimented with animated game objects and Resonance Audio. Each object represented a character, moving relative to the player's head - our idea was that by spatialising and animating the position of character sound sources, we'd build a convincing illusion of invisible characters occupying the same space as the player.

However, during testing, we found it hard to locate the characters, and the synthetically-placed sound didn't contain enough spatial cues to create the illusion of co-presence. Ultimately, we settled on traditional binaural recordings. These would permit greater control of the actor's perceived position while allowing our actor to physically embody their character as they moved around the dummy mic head.

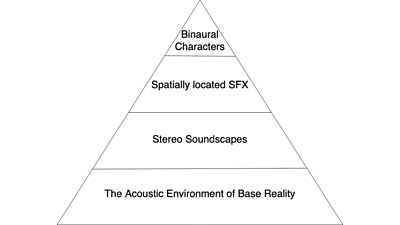

Conceptually, these different audio layers assumed a pyramid-like hierarchy:

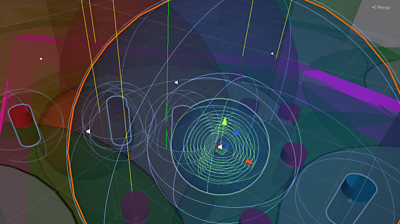

For our final scene, we decided to push our generative soundscape ideas further. The story concept called for a party, thrown by Nigel for all of his alternate-universe counterparts. We mapped out a sound environment with a few distinct areas - a pool party, for instance, or a bar area. Each of these areas would be anchored by one central sound object - a pool, or a bar - and populated by several Nigels wandering around. Players would be free to explore these areas and listen in to the Nigels chatting with one another.

When the player makes it to this end scene, we fill the park with copies of these areas. They are laid out so that they always overlap, creating the impression of a busy party with no dead areas, so the player doesn't feel lost. No two identical areas sit next to each other, which creates the feeling of a variety of environments. The wandering Nigels orbit the centre of their nearest area's anchor object at varying distances and speeds.
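The layout rules above can be sketched in a few lines, as an illustration rather than the prototype's code. Assumptions: areas sit on a square grid, the area names beyond the pool and bar are hypothetical, and the orbit radii and speeds are made-up ranges. If each area can be heard out to some radius R, choosing a grid spacing at or below R·√2 leaves no spot inside the grid out of earshot, because the furthest point from any anchor is a cell's centre, spacing/√2 away.

```python
import math
import random
from dataclasses import dataclass

# Hypothetical area kinds; the post mentions a pool party and a bar.
AREA_TYPES = ["pool", "bar", "dance_floor", "garden"]


def layout_areas(cols, rows, spacing, rng):
    """Tile party areas on a square grid, never repeating a type next to itself.

    Each cell avoids the types of its left and upper neighbours, which rules
    out horizontal and vertical repeats (diagonal repeats are still allowed).
    Needs at least three types so a choice always remains.
    """
    types = {}
    for r in range(rows):
        for c in range(cols):
            banned = {types.get((r, c - 1)), types.get((r - 1, c))}
            types[(r, c)] = rng.choice([t for t in AREA_TYPES if t not in banned])
    return [((c * spacing, r * spacing), t) for (r, c), t in types.items()]


@dataclass
class WanderingNigel:
    anchor: tuple    # (x, y) of the area's central sound object, in metres
    radius: float    # orbit distance from the anchor, in metres
    speed: float     # speed along the orbit, in metres per second
    phase: float     # starting angle, in radians

    def position(self, t):
        """Position after t seconds of orbiting the anchor."""
        angle = self.phase + (self.speed / self.radius) * t
        return (self.anchor[0] + self.radius * math.cos(angle),
                self.anchor[1] + self.radius * math.sin(angle))


def populate(anchor, count, rng):
    """Scatter `count` Nigels around one anchor at varied radii and speeds."""
    return [WanderingNigel(anchor,
                           radius=rng.uniform(2.0, 6.0),
                           speed=rng.uniform(0.3, 0.8),  # kept slow: walking pace or below
                           phase=rng.uniform(0.0, 2 * math.pi))
            for _ in range(count)]
```

Expressing orbit speed in metres per second rather than radians per second keeps outer and inner Nigels moving at a similar, gentle pace, which matters given how long people take to lock on to a moving sound.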

We got as far as prototyping this scene - it sounds promising, and the next step would be working with Rosie and Nigel to create a whole lot of lines of dialogue to emit from the virtual partygoers.

Talking to Nigel

One of the key interactions during the piece is the conversations you have with the various parallel versions of Nigel. The interaction design for these conversations is a direct descendant of the work we've done on interactive stories for voice devices, most notably The Inspection Chamber for Alexa.

Using a custom-written Unity plugin, we were able to use the built-in speech recognition engine on iOS to listen for user speech, transcribe what was being said, and use the content of that transcription to drive choices in the story.
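The post doesn't describe how a transcription was turned into a story choice. One simple possibility, sketched here purely as an assumption, is keyword matching: each branch lists some hypothetical trigger words, the branch sharing the most words with the transcript wins, and no match returns `None` so the story can re-prompt. Obtaining the transcript from the iOS recogniser through the Unity plugin is outside this sketch.

```python
import re


def match_choice(transcript, choices):
    """Pick the story branch whose keywords best match a speech transcript.

    choices maps a (hypothetical) branch id to a set of lowercase keywords.
    Ties go to the branch listed first. Returns None when no keyword matches,
    so the caller can ask the player again.
    """
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    best, best_score = None, 0
    for branch, keywords in choices.items():
        score = len(words & keywords)
        if score > best_score:
            best, best_score = branch, score
    return best
```

With `choices = {"follow": {"follow", "yes", "go", "okay"}, "stay": {"stay", "no", "wait"}}`, the transcript "Yes, let's go!" selects `"follow"`, while "Hmm, I'm not sure" matches nothing and returns `None`.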

We didn't quite get to push this as far as we'd have liked. Technical problems getting the speech recogniser to work simultaneously with Resonance Audio meant that we spent a lot of time on the implementation before we could get to play with it as an input method.

Au revoir, Nigel

We got a long way through developing our prototype, with a few iterations of Rosie's script and one studio-recorded and sound-designed version of the experience audio under our belt. We'd implemented a full version of the story and interactions and were gearing up for a soft launch and beta release in time for South by Southwest.

Unfortunately, the project was significantly disrupted by the global coronavirus pandemic. Our travel to SXSW, and then the festival itself, were sadly cancelled. It quickly became clear that it would be a while before we could safely work in a studio with Nigel, and co-ordinating a geographically dispersed bunch of collaborators suddenly became a significant challenge. Testing a piece that can only be fully reviewed by wandering extensively around parks was tricky in the early weeks of lockdown, especially with our team working in isolation. And then news broke that Bose would no longer be supporting third-party apps for the Frames. Faced with all of this, we placed the project on indefinite hold.

We hope to release the experience one day; Apple has recently announced that AirPods are capable of spatial audio and we'd be interested in attempting a port when details of the SDK become clearer. We still think that wearable audio and audio AR experiences will be part of the tech landscape in the future, although it's tough to make any predictions in 2021!

Recommendations

Even if the final piece hasn't seen the light of day, we hope that by writing up our experience of developing it we can inspire or galvanise other creators to take our ideas in directions of their own. Here are some of our key findings.

- We avoided making a purely technology-led experience. The creative work and concept development pushed our research and development in expansive and unexpected ways. Our prototype began life as a back-and-forth between collaborators; research aims became creative direction, and then our experience design was in response to material improvised from that direction.

- We worked with creatives from parallel or overlapping disciplines. Nigel and Ben have been making immersive theatre for years, and Rosie's experience in embodied storytelling, games and digital placemaking was perfect for this project. Exploring a new technology didn't mean there weren't already people in the world with relevant experience.

- A spatialised sound object in the virtual space around a player's head was not enough of a cue by itself to allow people to navigate their way to that object. We ended up adding a low-pass filter to “muffle” sounds behind a player, exaggerating how this effect occurs naturally with ears and heads in the real world, and using layered sounds as positive feedback to let a player know they were going in the right direction.

- Using spatialised sound objects to play back dialogue between the player and the characters they encounter was not particularly successful, in terms of making a character sound 'there' with you. We had decided to record the next version of our character audio with a binaural dummy head for a better sense of placement; more experimentation is needed with this.

- Using map data to drive an experience only works if the player's phone has a good data connection, which is not always guaranteed. Also, constraining the experience to only run inside the boundaries of a mapped park can result in players being locked out if they're in an open space that isn't marked as a park in that map data.

- Augmented sound objects that exist in the game space cannot move too quickly, as people take time to home in on a sound's location. Move them too fast and the experience becomes disorientating, with sounds constantly zipping by before the user can lock on to them and process them!

- A lot of the work needs to be done in the same (or a similar) sonic environment to the one where the experience will take place. The difference between crafting a sound world in a quiet office with headphones on and listening to that world on an open-backed pair of headphones in a busy park is drastic. We even chased what we thought were bugs, only to find that they weren't in the software at all but were the result of these perceptual differences between sound environments.

- People need time to acclimatise to spatial sound if they haven't heard anything similar before. We achieved this through game mechanics and sound design, but it is important not to throw people directly into a 3D soundscape with no warning!

- People vary widely in their spatial sound awareness and their ability to focus on sound, and any experience needs to take that into account. For instance, we implemented a 'creep' function: if players were having difficulty homing in on the set-piece they were trying to find, it would eventually make its way to them.
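Two of these findings, muffling sounds behind the player and the 'creep' function, lend themselves to a quick sketch. The post doesn't give cutoff frequencies, timings or speeds, so every number here (20 kHz and 2 kHz cutoffs, a 60-second patience window, a 0.5 m/s creep speed, a 3 m stopping distance) is a hypothetical placeholder, and the geometry assumes 2D positions in metres. The cutoff value could then drive whatever low-pass filter the audio engine provides, such as Unity's `AudioLowPassFilter` component; that wiring isn't shown.

```python
import math


def rear_muffle_cutoff(heading_rad, listener, source,
                       open_hz=20000.0, muffled_hz=2000.0):
    """Low-pass cutoff frequency that falls as a source moves behind the listener.

    heading_rad is the direction the listener faces (radians, 0 = +x axis).
    Sources anywhere in front, up to the sides, stay unfiltered (open_hz);
    the cutoff then slides down to muffled_hz for a source directly behind.
    """
    bearing = math.atan2(source[1] - listener[1], source[0] - listener[0])
    # Angle between facing direction and source direction, folded into [0, pi].
    off_axis = abs((bearing - heading_rad + math.pi) % (2 * math.pi) - math.pi)
    behind = max(0.0, (off_axis - math.pi / 2) / (math.pi / 2))  # 0 at the sides, 1 behind
    # Interpolate on a log-frequency scale so the muffling changes evenly to the ear.
    return math.exp(math.log(open_hz) + behind * (math.log(muffled_hz) - math.log(open_hz)))


def creep_towards(target, player, dt, elapsed,
                  patience_s=60.0, speed=0.5, stop_at=3.0):
    """Move a hard-to-find set-piece slowly towards the player.

    Nothing happens until `elapsed` seconds reach patience_s. After that the
    target advances at most speed * dt metres per update (slow, so the sound
    never zips past) and halts stop_at metres from the player.
    """
    if elapsed < patience_s:
        return target
    dx, dy = player[0] - target[0], player[1] - target[1]
    dist = math.hypot(dx, dy)
    if dist <= stop_at:
        return target
    step = min(speed * dt, dist - stop_at)
    return (target[0] + dx / dist * step, target[1] + dy / dist * step)
```

Capping the creep speed well below walking pace follows the earlier finding that fast-moving sound objects are hard to lock on to; the set-piece drifts closer rather than suddenly appearing beside the player.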
